We have a variety of exciting opportunities available right now. Why not check them out?

DELIVERING_AUTONOMY_

Bringing an autonomous revolution to industrial logistics

StreetDrone is deploying the world’s safest remote and autonomous operations, a groundbreaking approach to industrial logistics for operators.

Operators

StreetDrone’s autonomous industrial operations capability can be deployed in any logistics operation that runs off public roads. It is well suited to ports, retail distribution and large-scale industrial environments.

Autonomous

Powered by some of the world’s most advanced software, deliveries are going autonomous.

At StreetDrone, we are at the forefront of autonomous technology developments, working with customers to understand how future technologies will influence the supply chain.

Teleoperations

Our teleoperation function means that vehicles can be driven safely by remote operators, taking people out of operational environments and making entire vehicle fleets more efficient.

Vehicles

StreetDrone’s specialist automotive technology division, SD Advanced Engineering, enables vehicles to be retrofitted with all of our drive-by-wire technology.

We’re also partnering with Terberg, the market-leading yard tractor manufacturer, to ensure that operators have access to the latest in safe autonomous and teleoperated technology.

Autonomous terminal tractors can bring near-term cost and efficiency gains, and have massive advantages over older AGV technology.

CASE_STUDY_

StreetDrone's work at Nissan Sunderland

Automated Driving

The V-CAL project will deliver live loads from Vantec to Nissan Motor Manufacturing UK fully autonomously, without intervention by a safety driver. Check the new dedicated website for V-CAL.

Autonomous Software

The autonomous functionality is built by both the StreetDrone team and a network of partners who collaborate with Project Aslan, an open-source software project designed to accelerate the development of autonomous capability.

Simulation

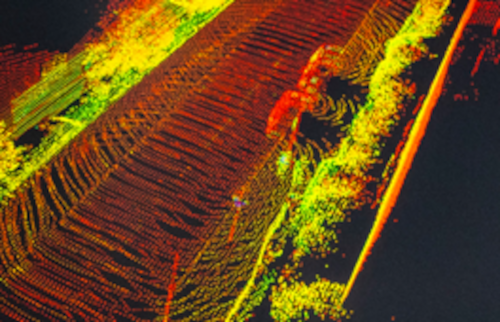

To ensure that the 5G CAL project has safety at its core, all technology developments are tested both in simulation and in physical environments, including on Nissan’s own Sunderland test track.

Integration

The Terberg autonomous truck connects to, or integrates with, technology from partners such as Nokia (5G), Hitachi (connected infrastructure and predictive analytics) and Voysys (teleoperation software).

STREETDRONE_FAMILY_

Meet the team

WORKING_TOGETHER_

Technology and project partners